Prelude: Choosing the best monitoring system for your Solar PV assets – Inverter Bundled, Legacy SCADA builder applications versus a Built for Purpose Solar PV monitoring solution

Remote monitoring, or SCADA (Supervisory Control and Data Acquisition) as the industry loosely calls it, promises to transform how efficiently we run our plants. Choosing wisely among the array of options is critical, since a renewable energy asset typically has a life of 25+ years. Let’s dive in and see what these options are and which one you should go for.

Inverter Bundled Software Platforms

If we were to arrange solar monitoring solutions on a price or feature range from low to high, a software platform bundled with an inverter or other hardware sits at the far left, generally offering basic monitoring services. OEMs (original equipment manufacturers) of devices such as inverters, combiner boxes, dataloggers, transformers and meters provide a web portal for visualizing and reporting their data, either free or for an add-on subscription fee. How high this fee is depends on the country you are in and the OEM you go with.

The biggest advantage is that it is quick and dirty: it comes off the shelf, needs no design effort, and can typically go live the moment the plant is charged and internet connectivity is available. The drawback is that these platforms usually struggle with compatibility and support for other hardware OEMs’ architectures and platforms. Their features are also typically limited and don’t allow you to scale or enhance your asset analytics. Customization is generally not permitted, or it needs add-on custom development, which can become an expensive affair.

Bundled platforms allow a single operator to monitor many projects, the only constraint being that all hardware needs to be of the same make (OEM). Among the Asset Owners/IPPs we have seen across the world over the last two decades, very rarely has a fleet or group of assets shared the same inverter (equipment) make or model. Hence, portfolio management through this software may be inefficient and cumbersome.

To summarize, for a residential rooftop owner with a single plant this may make a lot of sense. However, for any Asset Owner with more than one asset and multiple OEMs, who would like to enhance performance and manage a portfolio, this approach may become a nightmare: it could lead to the problem of managing multiple portals and logins in the very near future.

We need to realize that remote monitoring is not the primary goal for these OEMs. Resources are always a constraint, and they have to decide where to spend the next dollar: on developing the core technology of the hardware product, or on R&D for the monitoring system?

Hardware-integrated software emerged to fulfil a growing customer need, but also to give OEMs real-time data on how their products perform on the ground (which could help enhance the hardware product) and to help tackle warranty-related issues.

Legacy SCADA

Industrial process control emerged in the early 1960s, and by the mid-1970s the term SCADA was in wide use to refer to automated control and data acquisition systems.

Open SCADA platforms are compatible with most OEMs; with open communications and connectivity, the system can communicate with PLCs from nearly any OEM, minimizing integration issues. Legacy SCADA sits at the far extreme of the customization range and is generally a fully custom-built system. These systems are made to suit a particular plant or owner’s needs (a single SCADA system is rarely used to monitor and control multiple plants), and the implementation for a given system is not repeatable for another plant.

The make, model, quantity and configuration of equipment required for a SCADA system are unique to a given asset, and hence the configuration, screens and software that interface with the equipment are custom developed for each implementation. This often results in high costs and the deployment of engineers in the field. It also limits the transfer of best practices across the wider industry. Support for these systems can be limited, as the business model is based on one-time integration and setup fees, and the work is typically done by System Integrators and not the OEMs themselves. While most experienced SCADA integrators have been successful, significant bugs or shortcuts may still exist in the delivered solution. Commissioning of a SCADA system therefore needs to be robust enough to catch configuration errors. It is important to ensure that the user acceptance test is carried out by competent personnel, so that no false data or alarms exist and no required functionality is missed.

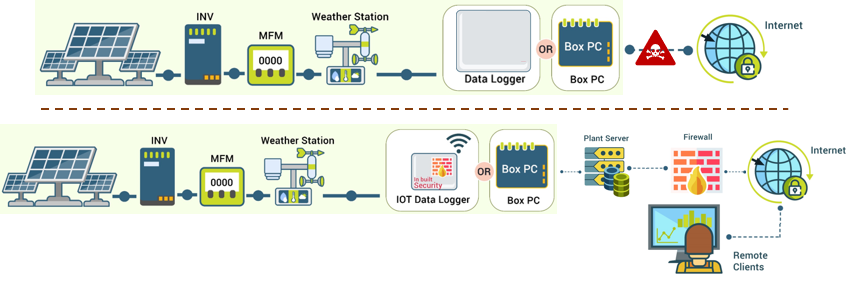

In general, these systems were designed decades ago as stand-alone, isolated systems that focused on strong PLC logic and reliability rather than security against intrusions. With the advent of Industry 4.0 and the need to integrate, collaborate, and run analytics on central cloud engines, the question is now being asked: will IoT replace traditional SCADA?

A major weakness of legacy systems is their inability to run deep learning or even first-level analytics, at a time when such analytics are becoming the norm rather than an optional extra. With loads of data being captured, asset owners want meaningful insights and timely predictions that allow them to plan and prepare for any situation. For example, SCADA data, when combined with predictive algorithms, can optimize asset health and produce substantial cost savings.

Last year McKinsey and Company reported a loss of about 5% in overall equipment effectiveness across the industry due to unplanned maintenance. At one chemical plant, analytics cut the time it took to repair pumps by at least half, which amounted to about $120,000 in costs avoided per pump failure.

Another reason for Asset Owners to consider new-generation remote monitoring systems is browser compatibility (Google Chrome, Firefox or Edge) and mobile readiness, which legacy SCADA systems traditionally don’t offer because they are deployed as an .exe (executable program) on the plant server.

Also, most traditional monitoring systems use communications protocols that were developed for humans to communicate with one another via devices. The metadata often included in these data packets substantially increases packet size, which consumes computation and bandwidth capacity. Moving to an M2M (machine-to-machine) protocol makes data transfer and system operations far more efficient. It also reduces the latency of transmission and control, ensuring higher responsiveness and safety at the site.
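To make the packet-size point concrete, here is a minimal Python sketch that sends the same inverter reading two ways: as an HTTP-style text request with a JSON body, and as a compact fixed binary layout. The field names, URL path, host and header set are all made up for illustration, and the binary layout is not the wire format of MQTT or any other real protocol; it only shows how much of a message can be metadata rather than measurement.

```python
import json
import struct

# Hypothetical reading from one inverter (names and values are illustrative).
reading = {"device_id": 17, "timestamp": 1700000000, "ac_power_w": 48250.5}


def verbose_message(r: dict) -> bytes:
    """Build an HTTP-style POST: text headers followed by a JSON body."""
    body = json.dumps(r).encode("utf-8")
    headers = (
        "POST /api/v1/readings HTTP/1.1\r\n"      # assumed endpoint
        "Host: monitoring.example.com\r\n"        # assumed host
        "Content-Type: application/json\r\n"
        "User-Agent: plant-logger/1.0\r\n"
        f"Content-Length: {len(body)}\r\n"
        "\r\n"
    ).encode("ascii")
    return headers + body


def compact_message(r: dict) -> bytes:
    """Pack the same three fields into a fixed big-endian binary layout:
    unsigned 16-bit device id, unsigned 32-bit timestamp, 32-bit float power.
    """
    return struct.pack(">HIf", r["device_id"], r["timestamp"], r["ac_power_w"])


verbose = verbose_message(reading)
compact = compact_message(reading)
print(f"verbose: {len(verbose)} bytes, compact: {len(compact)} bytes")
```

A real M2M protocol adds its own (small) header and topic information on top of the payload, so actual savings depend on the protocol, the payload encoding and how readings are batched.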

To summarize, for an Asset Owner who already has comfort and experience with legacy SCADA, maybe from a past thermal or hydro plant implementation, it may seem like a good bet to continue installing legacy SCADA for future projects as well. However, when it comes to being mobile ready, portable-device ready, and able to integrate third-party tools and analytics, the solution may start limiting capabilities and output. It could lead to the problem of retrofitting the legacy SCADA with new-age software in the very near future.

We need to realize that Legacy SCADA originated in complex factories and large power plants, which were isolated and had varied equipment, and where SCADA monitoring meant expert operators watching all the data. But 50 years on, times have changed, and technology keeps disrupting the field every year. In renewables, tariffs are dipping, assets are small and distributed in remote areas, plant control is essential and cyber security is the norm. The need is to move to automated, actionable insights from the software rather than depending on human monitoring by experts. Can we really monitor 15 pages per asset? It is humanly impossible, let alone the risk of human error or incapability.

The key question to ask is: would you like to reinvent the wheel every time you develop the screens you think are best, or rely on an independent software company that has built for solar or wind monitoring through collective on-the-ground experience of billions of data points and thousands of devices?

Built-for-Purpose Systems

Built-for-purpose systems are pervasive across industries. The concept works because businesses are most successful when they have the right tool for the job: a tool that keeps improving, keeps making work easier, and enables decisions. These platforms are generally SaaS (Software as a Service) products, hosted on the cloud, that address explicit needs which can’t be met with general-purpose tools.

Typically, they handle huge volumes of data, both in ingesting it into the system and in storing it, which requires infrastructure that can handle such transactions. Every data point has a unique time stamp so the system can understand when something was measured or when an event happened. The system must interpret and analyze the data in real time to act while it’s still meaningful, and remove anomalies and junk at the source.
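As a rough illustration of time-stamped data points and of removing junk at the source, the Python sketch below drops duplicate readings (same device and time stamp) and values outside a plausible range before anything is stored. The `Reading` type, the device names and the power limits are assumptions made for this example; a real platform would take its limits from equipment datasheets and apply far richer checks.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Reading:
    device_id: str
    timestamp: datetime  # UTC time at which the value was measured
    ac_power_kw: float


# Assumed plausibility limits for a single inverter (illustrative only).
MIN_POWER_KW = 0.0
MAX_POWER_KW = 110.0


def clean(readings):
    """Keep the first reading per (device, timestamp) and drop values
    outside the plausible range. NaN fails the range check and is dropped."""
    seen = set()
    kept = []
    for r in readings:
        key = (r.device_id, r.timestamp)
        if key in seen:
            continue  # duplicate of a reading we already kept
        if not (MIN_POWER_KW <= r.ac_power_kw <= MAX_POWER_KW):
            continue  # out-of-range or NaN: treat as junk
        seen.add(key)
        kept.append(r)
    return kept


t0 = datetime(2024, 6, 1, 12, 0, tzinfo=timezone.utc)
raw = [
    Reading("INV-01", t0, 95.2),
    Reading("INV-01", t0, 95.2),     # duplicate transmission
    Reading("INV-02", t0, -4000.0),  # sensor glitch
    Reading("INV-02", t0, 88.7),
]
cleaned = clean(raw)
print(f"kept {len(cleaned)} of {len(raw)} readings")
```

Filtering this close to the data source keeps bad values out of downstream storage and analytics, at the cost of having to maintain sensible limits per device type.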

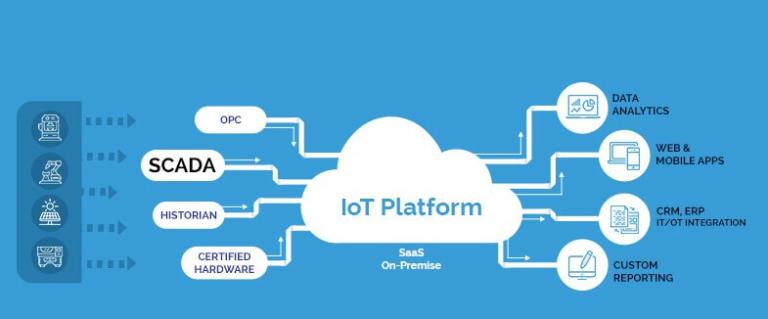

IoT-based SCADA systems are the best example of such systems; they integrate easily with other hardware, software and networks. Because the cost of development is spread across many customers, these systems usually cost less than traditional SCADA.

SCADA and DCS (distributed control systems) are prevalent industry standards, and IoT is complementary to them. Information from SCADA systems acts as a source for IoT. SCADA’s focus is on monitoring and control; IoT’s focus is firmly on analyzing data to improve productivity.

IoT-based platforms are customizable, affordable, cyber secure, scalable, easy to integrate, and able to process data from many sources at a time.

All of these concerns with legacy SCADA and traditional monitoring systems have been major drivers of the adoption of built-for-purpose systems, using the Internet of Things (IoT) or similar technologies, across other industries. The lessons are similar for the Solar PV industry. Optimizing the transfer of data for PV plants is not simple, and bandwidth requirements are different for every project. If string data is being captured at a particular site, the amount of data can be much larger than at a site where only inverters and meters are being monitored; if panel-level data is available, the amount can be larger still. To resolve these issues, many platform providers use modern machine-to-machine protocols like MQTT, which help reduce the size of the data so that more can be sent over the same bandwidth.
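A back-of-the-envelope calculation shows how quickly data volume grows with monitoring granularity. The Python sketch below assumes a hypothetical site (20 inverters, 400 strings, 10,000 modules), a one-minute logging interval and 16 bytes per data point on the wire; every one of those numbers is an assumption chosen for illustration, not a figure from a real plant or protocol.

```python
SECONDS_PER_DAY = 86_400


def daily_megabytes(points: int, interval_s: int, bytes_per_point: int) -> float:
    """Estimate payload volume per day for a given number of data points."""
    samples_per_day = SECONDS_PER_DAY // interval_s
    return points * samples_per_day * bytes_per_point / 1_000_000


# Hypothetical site and tag counts (all assumed).
INVERTERS, TAGS_PER_INVERTER = 20, 10
STRINGS, TAGS_PER_STRING = 400, 2
MODULES, TAGS_PER_MODULE = 10_000, 2

inverter_points = INVERTERS * TAGS_PER_INVERTER
string_points = inverter_points + STRINGS * TAGS_PER_STRING
panel_points = string_points + MODULES * TAGS_PER_MODULE

INTERVAL_S = 60        # assumed logging interval
BYTES_PER_POINT = 16   # assumed encoded size of one data point

estimates = {
    "inverter-level": daily_megabytes(inverter_points, INTERVAL_S, BYTES_PER_POINT),
    "string-level": daily_megabytes(string_points, INTERVAL_S, BYTES_PER_POINT),
    "panel-level": daily_megabytes(panel_points, INTERVAL_S, BYTES_PER_POINT),
}
for level, mb in estimates.items():
    print(f"{level}: {mb:.2f} MB/day")
```

Under these assumptions the estimate scales linearly with the number of points and the bytes per point, which is why a leaner encoding or protocol matters most at string and panel level.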

Most large-scale PV projects are located in remote areas that do not have ISP coverage or cell service. Local communications interference is also a problem. This interference may come from electrical sources, such as feedback from the inverters, or from signals being blocked by panels.

These concerns can be reduced by using a combination of technologies within a plant’s network. This can include WiFi, cellular 2G/3G/4G, Zigbee mesh networks, and even WAN technology. Traditional monitoring providers use only a single technology across all their customers regardless of plant topology and location. These solutions are nontrivial to implement, so it is only cost effective to choose the most applicable communications technique.

Moving diagnostics to the edge provides additional benefits when used in conjunction with an IoT-based monitoring application. For instance, there is a subset of faults that will always require a site to be disconnected from the grid. By moving to an IoT-based solution using lower-cost edge computer hardware, the latency between fault occurrence and shutdown can be reduced relative to that achieved with a high-cost SCADA system. When edge computing is coupled with machine learning and cloud-based analytics, PV monitoring systems can become more autonomous, allowing not only automated investigation of the root cause and failure area of fault events, but also actions such as technician dispatch or site-level disconnect.
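The idea of deciding locally on the faults that always require a disconnect can be sketched in a few lines of Python. The fault codes and the three actions below are hypothetical; which faults actually mandate a grid disconnect depends on the applicable grid code and the equipment, and a production system would drive real protection hardware rather than return an enum.

```python
from enum import Enum


class Action(Enum):
    DISCONNECT = "disconnect"                  # act at the edge, immediately
    DISPATCH_TECHNICIAN = "dispatch_technician"
    LOG_ONLY = "log_only"                      # leave to cloud analytics


# Hypothetical fault codes (assumed for illustration).
CRITICAL_FAULTS = {"GROUND_FAULT", "GRID_OVERVOLTAGE", "ANTI_ISLANDING"}
SERVICE_FAULTS = {"FAN_FAILURE", "STRING_UNDERPERFORMING"}


def decide(fault_code: str) -> Action:
    """Map a fault code to an action without a round trip to the cloud."""
    if fault_code in CRITICAL_FAULTS:
        return Action.DISCONNECT
    if fault_code in SERVICE_FAULTS:
        return Action.DISPATCH_TECHNICIAN
    return Action.LOG_ONLY


for code in ("GROUND_FAULT", "FAN_FAILURE", "COMM_TIMEOUT"):
    print(code, "->", decide(code).value)
```

Keeping the critical rule set small and local is what shortens the path from fault to shutdown; anything ambiguous can still be forwarded for slower, richer analysis in the cloud.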

The trend of monitoring system evolution over the past 10 years has been to bring prices down, resulting in a commoditized solution that favors innovations in flashy software features rather than a rethinking of the framework around which a monitoring system is built. By looking to emerging technologies, monitoring providers can challenge these assumptions yielding a lower-cost yet higher-functioning monitoring solution. Such an evolutionary step is now coming to the market in the form of IoT-based solutions, which will enable better efficiencies and lower operational costs in monitoring and managing a PV project.

To summarize, the space between a low-cost, low-touch, manufacturer-based monitoring system and a high-cost, high-touch SCADA system is inhabited by third-party remote monitoring systems. These are generally SaaS products hosted in the cloud, which have a few advantages over equipment-direct monitoring.

- Firstly, they allow a single operator to monitor many projects. Secondly, providing monitoring solutions is the primary objective of these third-party companies, which is not the case for OEMs. If a monitoring company provides a suboptimal product, there’s little chance of repeat business.

- Their advantages over traditional SCADA systems are that, since the cost of development is spread across customers, they are usually cheaper, and many can now offer the same level of control as SCADA systems.

- Cloud-based remote monitoring systems are more flexible, more frequently updated, and more scalable. However, there are also concerns about security, which in many applications is of grave importance. Only a few offtakers are comfortable with cloud-based control systems because of these concerns. Most utilities are distrustful of unproven innovation and are less likely to accept a solution that has not been proven for years or even decades.

References

- https://solarbuildermag.com/pv-modules/keep-watch-pv-monitoring-landscape-evolving/

- https://www.windpowerengineering.com/risks-iot-automation-pose-scada-legacy-systems/

- https://iiot-world.com/industrial-iot/connected-industry/internet-of-things-and-scada-is-one-going-to-replace-the-other/

- https://altizon.com/iot-scada-complementary-technologies/

- https://towardsdatascience.com/detecting-real-time-and-unsupervised-anomalies-in-streaming-data-a-starting-point-760a4bacbdf8

- https://www.cio.com/article/3279024/new-purpose-built-platforms-are-ready-to-leverage-the-age-of-instrumentation.html

- https://www.controleng.com/articles/best-practices-in-integrated-hmi-scada-systems-enable-smarter-data-acquisition/